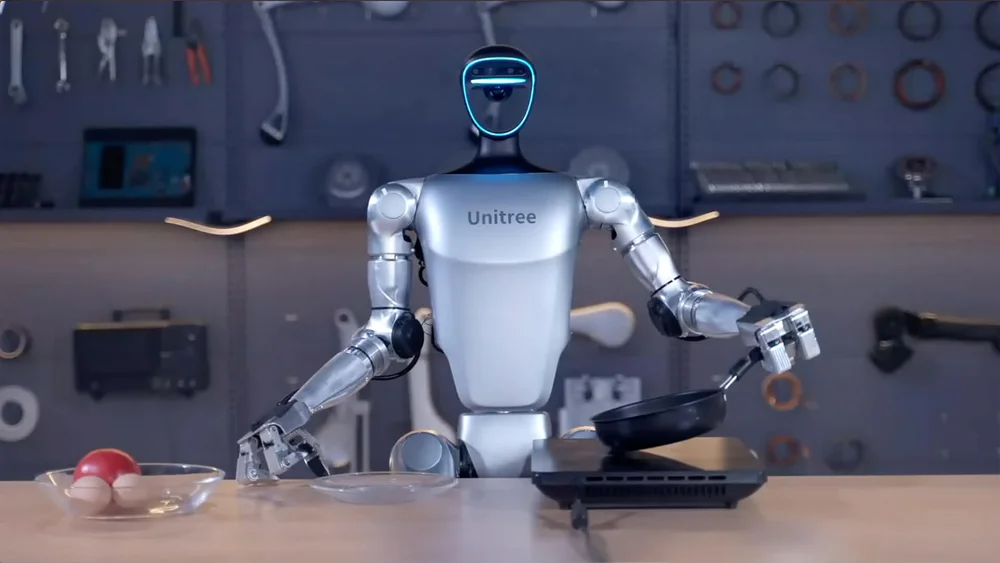

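Connect the robot body first, so evolution runs on a stable foundation

Bring your proven robot bodies onto a single cloud platform, so every self-evolving capability is backed by online status, version control, training and release, and fleet operations.

These are not four isolated features but one self-evolution pipeline. First bring the robot body onto the platform, then establish real-time interaction, continuous coaching, and a data loop, so the robot can reliably take on its job.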

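Stardust captures the on-site signals a self-evolving robot needs: speaker changes, interruptions, context shifts, local perception, and motion feedback.

It turns one-off tuning into long-term learning, so persona, preference memory, job skills, and service standards gradually stabilize through continuous coaching.

Self-evolution is not a slogan. It is a fixed loop that turns live issues into diagnosis, correction, release, and the next round of validation in a real job.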

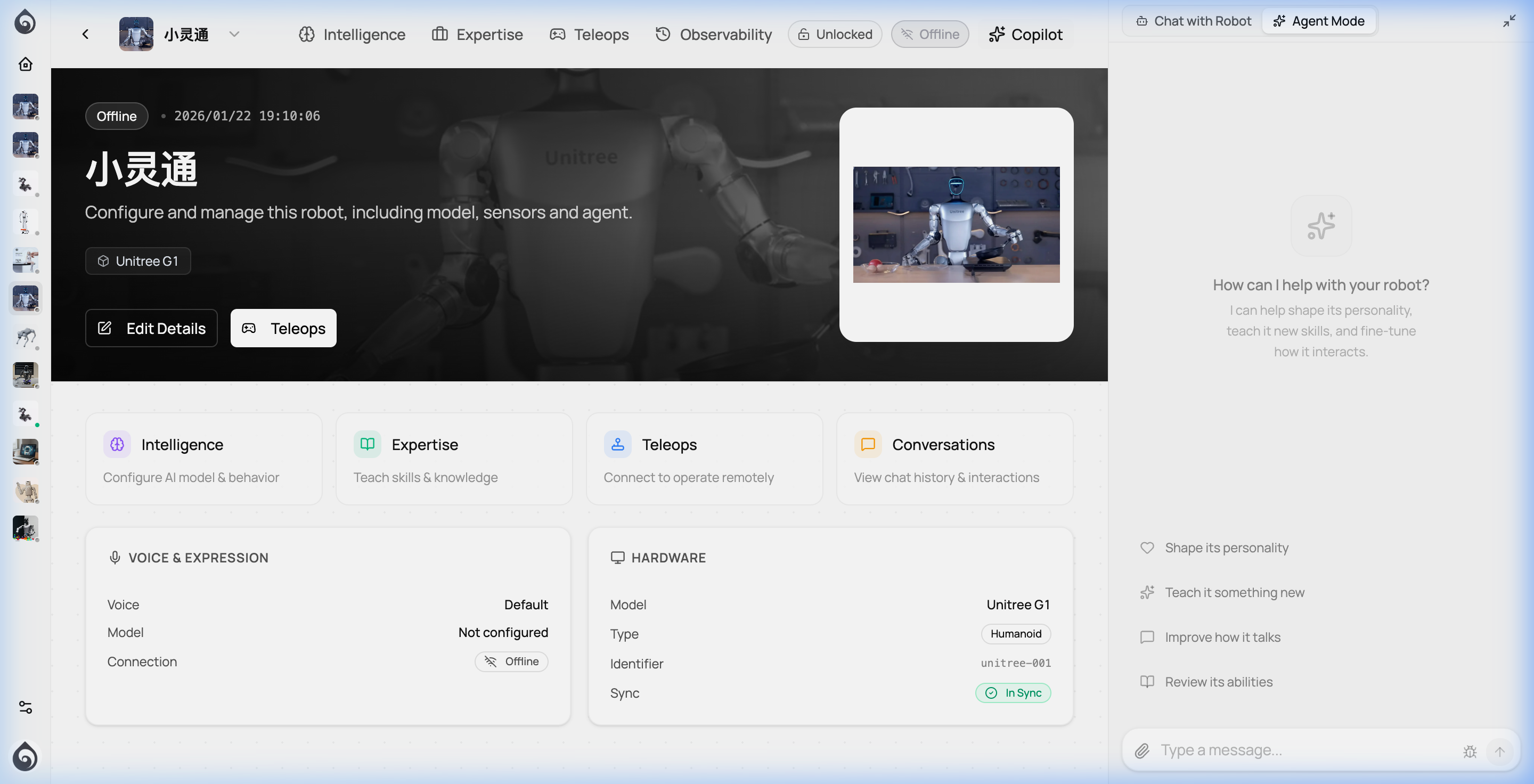

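From memory and perception to skills, safety guardrails, and multi-model routing, Ticos brings together on one platform the core capabilities a robot needs to keep evolving after deployment.

Define the robot's tone, professionalism, warmth, and etiquette standards, giving each robot a distinctive persona suited to its role.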

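Millisecond-level latency keeps speech, gaze, gestures, and facial expressions naturally in sync, removing the "robotic" feel from interaction.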

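Combine speech, vision, and context to accurately determine who is speaking, where they are, and what they implicitly intend.

End-to-end streaming supports instant interruption and natural turn-taking, built for real environments with frequent interaction.

Dynamically invoke business tools such as navigation, booking, and lookup as the conversation unfolds, so each interaction delivers a concrete result.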

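Automatically route each task to the most suitable LLM/VLM based on its complexity, balancing cost, speed, and quality.

Assess task difficulty from context and flexibly match it to a lightweight or a very large model.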

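Maintain multi-turn dialogue state and scene knowledge so the service process stays logically coherent.

Extend robot skills quickly through standard protocols and connect to a wide range of enterprise business systems.

Multi-layer safety review and behavior locking keep the robot's speech and actions compliant and stable in real jobs.

More than just chat: by combining memory, skill growth, and scenario-specific standards, the robot keeps improving within real service workflows.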

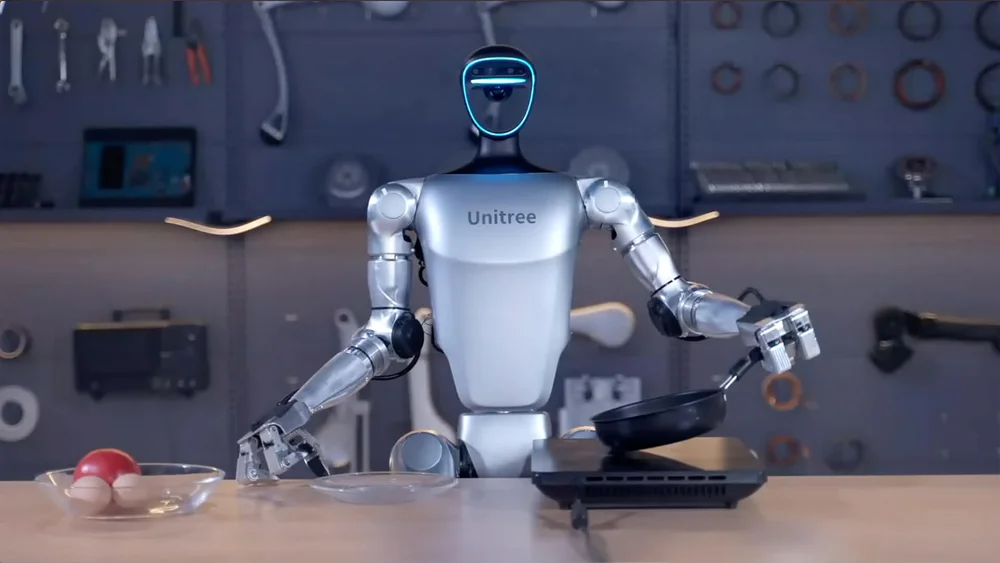

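Whether it is a leading humanoid platform or a lightweight desktop robot, it can gain intelligence through Ticos.

Deeply optimized control chains for mainstream hardware ensure model commands are translated into physical motion precisely and in real time.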

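Full-size or compact humanoid platforms with whole-body coordination and rich interactivity.

Stable traversal of complex terrain, suited to inspection, guiding visually impaired people, and outdoor accompaniment.

Cost-effective indoor service platforms for food delivery, guidance, and warehousing.

Non-mobile interactive scenarios such as desktop companionship, educational programming, and smart cockpits.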

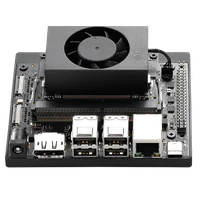

If you are building your own robot body, we also support running the Ticos runtime directly on core compute boards.

NVIDIA Jetson

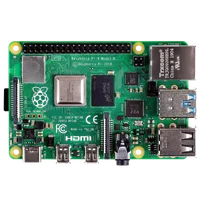

Raspberry Pi

ESP32

Android Board

RTK

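Connect the runtime foundation, coach job skills, then turn real-world feedback into safer, more useful behavior in the next version.

Through conversation, demonstration, replay reviews, configuration corrections, and diagnostic feedback, robots build more stable job skills over ongoing operation.

Key questions about "coaching robots".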